Three years ago, most enterprises were only running small LLM integration pilots: a handful of prompts here, a proof of concept there, maybe a demo for the board somewhere in between.

I feel that phase is over.

In 2025, enterprise generative AI spending hit $37 billion, a 3× jump from the previous year.

67% of organizations now use AI in at least one core business function.

The question has changed.

It's no longer whether to integrate LLMs into your digital products; it's how fast you can move from experiment to production, and how you avoid the traps that catch most teams off guard.

That's what this piece is about.

But before getting into the how, it helps to understand exactly why this is happening so fast and why the business case is stronger than it's ever been.

The short answer: the numbers just work.

A Microsoft-IDC study surveyed over 4,000 business leaders and found that companies investing in generative AI are seeing an average return of $3.70 for every $1 spent.

Top performers are hitting $10.30 for every $1 spent.

There’s more on the productivity side.

Developers using LLM-powered coding tools complete tasks 55% faster (based on research across 4,800 developers by GitHub and Accenture).

Knowledge workers are roughly 40% more productive on complex tasks.

McKinsey reports that 92% of Fortune 500 companies are already using generative AI in some form.

I was particularly inspired by what Stripe did.

They built a custom AI foundation model trained on tens of billions of payment transactions. Almost immediately, their card-testing fraud detection jumped from 59% to 97% accuracy.

In 2024 alone, that recovered $6 billion in payments that would have otherwise been blocked.

Another one is Lumen Technologies.

They're projecting $50 million in annual savings from AI tools that give each sales rep back four hours every week.

So, the companies moving on LLM integration right now are not only doing so for the cost advantage; they are unlocking capabilities that simply weren't economically viable a year ago.

Of course, I realize that numbers can make the case in the abstract, but what does this look like when it's actually built and running?

To me, the use cases seem to be consolidating around four areas of the business.

Customer operations is the most visible. I’ll offer two more examples. Klarna deployed an AI assistant that handled 2.3 million customer conversations in its first month and cut average resolution time from 11 minutes to under 2 minutes. Octopus Energy's AI system now handles around 44% of all incoming customer emails, with satisfaction scores of 80–85%, which is higher than their human-only baseline. That workload is equivalent to the work of 250 full-time agents.

Internal knowledge management is just as impactful. For instance, McKinsey built an internal platform called Lilli that aggregates over 100,000 documents across 40+ knowledge sources. 72% of their 45,000 employees use it multiple times a week. The result is a 30% reduction in time spent searching and synthesizing information which — for a firm that bills by the hour — translates into more capacity.

Software development has arguably the most measurable story. GitHub Copilot now has 20 million users and is used by 90% of the Fortune 100. In studies across enterprise teams, it reduced pull request review time by 75% (from 9.6 days to 2.4 days). Developers describe it as a second engineer who never gets tired.

Analytics and decision-making is where things get interesting for leadership teams. Morgan Stanley built a GPT-4-powered assistant for 16,000 wealth advisors that surfaces relevant research and portfolio insights in minutes. The same work used to take hours of manual digging.

And then there's the workflow automation layer. Zapier's LLM integration serves teams that have no engineering resources: it connects AI models across 8,000+ apps, so non-technical people can build autonomous workflows without writing a single line of code.

Sure, knowing where LLMs are being applied is useful. But if you're a CTO evaluating this, the more pressing question is: how does this actually get built? What are the architectural choices, and what should drive them?

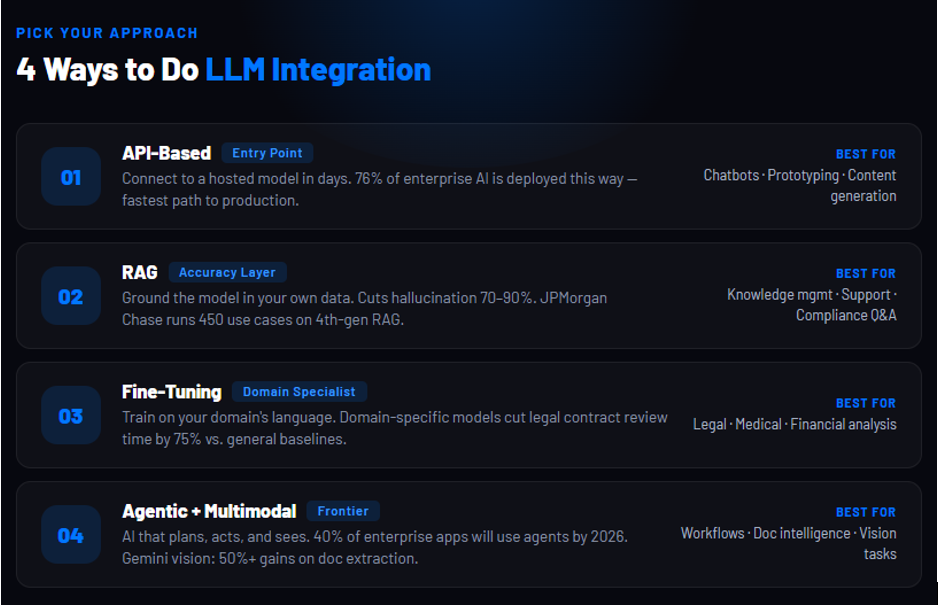

There isn't one way to do LLM integration. There are four.

And the right choice depends entirely on your use case, your data, and how much control you need over the output.

API-based integration is where most enterprises start. You connect your product to a hosted model (OpenAI, Anthropic, Google) via API, and you're live in days.

76% of enterprise AI use cases today are purchased this way rather than built from scratch. It's fast, relatively low-cost to start, and gives you access to frontier model capabilities immediately.

The trade-off is that your data leaves your environment. Also, I’ve seen that costs can scale unpredictably at volume, and you're dependent on the provider's model decisions.
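One common way to soften the provider dependency is to keep a thin interface between your product code and the hosted model. The sketch below is illustrative only: `ChatProvider`, `EchoProvider`, and `summarize_ticket` are hypothetical names, and the stub stands in for a real vendor call so the example runs offline.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatProvider(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoProvider:
    """Stand-in for a hosted model so the sketch runs offline.

    A real implementation would call the vendor's API in complete().
    """

    name: str = "stub-model"

    def complete(self, prompt: str) -> str:
        # Echo the first 40 characters instead of calling a real model.
        return f"[{self.name}] {prompt[:40]}"


def summarize_ticket(provider: ChatProvider, ticket_text: str) -> str:
    """Product code depends only on the interface, not on a vendor."""
    prompt = f"Summarize this support ticket in one sentence:\n{ticket_text}"
    return provider.complete(prompt)


if __name__ == "__main__":
    print(summarize_ticket(EchoProvider(), "Customer cannot reset password."))
```

With this shape, swapping vendors means writing one new class that satisfies `ChatProvider`, while the rest of the product code stays untouched.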

Retrieval-Augmented Generation (RAG) solves the problem that trips up most early deployments: hallucination.

A standard LLM answers from what it was trained on. RAG grounds it in your actual data (your documents, your policies, your knowledge base). It does so by retrieving relevant information at inference time and feeding it into the prompt.

The result is dramatic.

RAG reduces hallucination rates by 70–90% compared to standard LLMs on enterprise data. And accuracy on specific queries (policy lookups, product details, compliance questions) jumps from 30–50% with a vanilla model to 95–99% with a well-built RAG pipeline.

JPMorgan Chase's LLM Suite, used by 250,000+ employees across 450 use cases, runs on a fourth-generation multimodal RAG architecture.
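To make the retrieve-then-prompt idea concrete, here is a toy sketch. It assumes a tiny in-memory knowledge base and uses bag-of-words cosine similarity as a stand-in for the embedding search a production pipeline would typically use. `DOCUMENTS`, `retrieve`, and `build_prompt` are hypothetical names, and the final call to a model is left out.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; a real pipeline would index actual documents.
DOCUMENTS = [
    "Refunds are issued within 14 days of a returned item being received.",
    "Enterprise plans include single sign-on and a 99.9% uptime commitment.",
    "Support is available by email on weekdays from 9am to 6pm.",
]


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words counts; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: list[str], k: int = 1) -> str:
    """Place the retrieved passages in the prompt ahead of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return (
        "Answer using only the context below. "
        "If the answer is not there, say so.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("How many days do refunds take?", DOCUMENTS))
```

The point of the design is that the model answers from the retrieved passage rather than from memory, so answer quality depends heavily on what the retrieval step returns.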

Fine-tuning is the right call when you need a model that genuinely understands your domain rather than one that just retrieves from it.

Legal, medical, and financial applications need this more than others.

A fine-tuned model can absorb the specific vocabulary, reasoning patterns, and output formats that matter in regulated industries.

It's a higher investment, but the accuracy payoff is also significant. Domain-specific fine-tuned models have achieved a 75% reduction in legal contract review time compared to general-purpose baselines.

Agentic and multimodal integration is the frontier.

Agentic systems do more than just respond; they plan, take actions, and iterate across multi-step workflows.

62% of enterprises are experimenting in this space, though only 16% have what qualifies as a true production agent.

Gartner predicts 40% of enterprise applications will include task-specific AI agents by the end of 2026. That is up from less than 5% today.
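The plan, act, iterate loop can be sketched in a few lines. This is a deliberately simplified illustration: `plan_next_step` is a hard-coded stand-in for the model call that would choose the next action, and the tools (`lookup_order`, `send_email`) are hypothetical placeholders for real internal APIs.

```python
from typing import Callable, Optional


# Hypothetical tools; a real agent would call internal APIs here.
def lookup_order(order_id: str) -> str:
    return f"order {order_id}: shipped"


def send_email(body: str) -> str:
    return f"email sent: {body}"


TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "send_email": send_email,
}


def plan_next_step(
    goal: str, history: list[tuple[str, str]]
) -> Optional[tuple[str, str]]:
    """Stand-in for the model call that picks the next action.

    Returns (tool_name, argument), or None when the goal is complete.
    """
    if not history:
        return ("lookup_order", goal)
    if len(history) == 1:
        return ("send_email", history[-1][1])
    return None


def run_agent(goal: str, max_steps: int = 5) -> list[tuple[str, str]]:
    """Plan, act, observe, repeat, with a hard cap on the number of steps."""
    history: list[tuple[str, str]] = []
    for _ in range(max_steps):
        step = plan_next_step(goal, history)
        if step is None:
            break
        tool, arg = step
        result = TOOLS[tool](arg)       # act
        history.append((tool, result))  # observe; feeds the next plan
    return history


if __name__ == "__main__":
    for tool, result in run_agent("A123"):
        print(tool, "->", result)
```

The `max_steps` cap and the fixed tool registry are the kind of guardrails that separate a demo from something closer to a production agent: the loop cannot run indefinitely, and it can only take actions you have explicitly exposed.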

Multimodal is the piece most teams underestimate. The assumption is that LLMs are about text. Increasingly, they're not.

LLM vision integration using Google Gemini is one of the clearest examples of where this is going. Rakuten ran alpha tests with Gemini 3 on difficult documents (poor-quality photos, mixed-language scans, handwritten forms), and the model outperformed their previous baseline by over 50% on structured data extraction.

For any business processing invoices, contracts, medical records, or physical forms at scale, this capability is available now.

Of course, production is where the real picture emerges. And the honest truth is: most enterprise LLM projects hit serious friction. Here's what that friction actually looks like.

The failure rate for enterprise AI projects is not a secret, but the number is still jarring: 95% of enterprise generative AI pilots deliver zero measurable P&L impact, according to the MIT NANDA report published in August 2025. This was based on research across 300+ real-world initiatives.

In my opinion, this is not a technology problem. It's a deployment problem, and it has predictable causes.

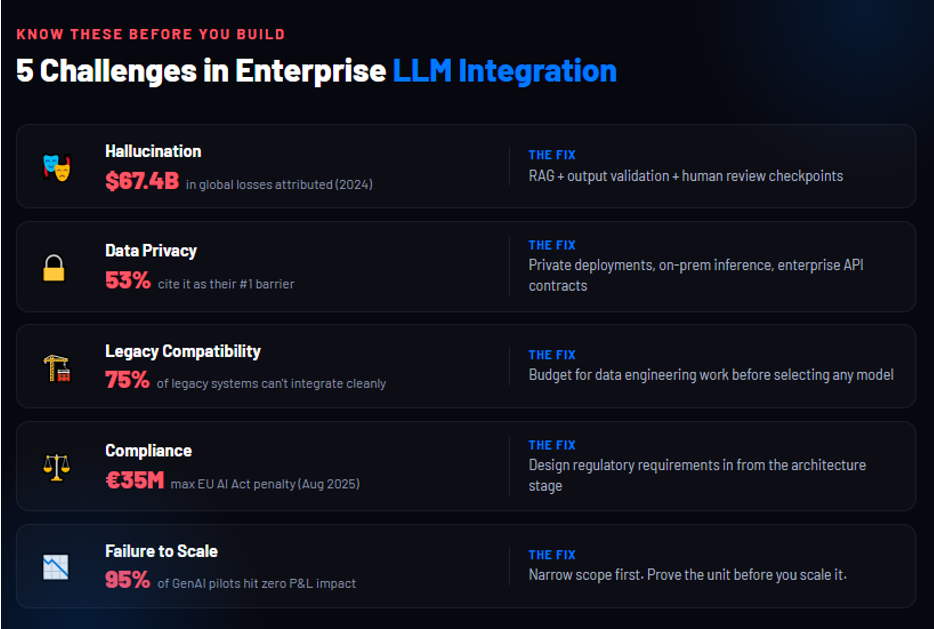

Even the best models get things wrong with confidence.

OpenAI's o3 reasoning model (at the time regarded as one of the most capable models) hallucinated 33% of the time on the PersonQA benchmark.

In legal contexts, the rate is higher.

The business cost is real: a study attributed $67.4 billion in global business losses to AI hallucinations in 2024. This isn't a reason to avoid LLM integration, but it is a reason to architect around it, using approaches like RAG, output validation, and human review checkpoints.
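As a rough illustration of output validation with a human review checkpoint, the sketch below checks a model's JSON reply against a small schema and routes low-confidence answers to a person. Everything here is an assumption made for the example: the categories, the `confidence` field (which in practice might come from a separate scoring step rather than the model itself), and the 0.8 threshold.

```python
import json
from dataclasses import dataclass

ALLOWED_CATEGORIES = {"billing", "technical", "account"}  # hypothetical schema
REVIEW_THRESHOLD = 0.8  # assumed cutoff; tune per use case


@dataclass
class Decision:
    status: str  # "accepted", "needs_review", or "rejected"
    reason: str


def check_model_output(raw: str) -> Decision:
    """Validate a model reply before anything downstream acts on it."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return Decision("rejected", "not valid JSON")
    if not isinstance(data, dict):
        return Decision("rejected", "expected a JSON object")

    category = data.get("category")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        return Decision("rejected", f"unknown category: {category!r}")

    confidence = data.get("confidence")
    is_number = isinstance(confidence, (int, float)) and not isinstance(
        confidence, bool
    )
    if not is_number or not 0 <= confidence <= 1:
        return Decision("rejected", "confidence missing or out of range")

    # Human review checkpoint: uncertain answers go to a person.
    if confidence < REVIEW_THRESHOLD:
        return Decision("needs_review", "low confidence")
    return Decision("accepted", "passed all checks")


if __name__ == "__main__":
    print(check_model_output('{"category": "billing", "confidence": 0.95}'))
    print(check_model_output('{"category": "billing", "confidence": 0.5}'))
    print(check_model_output("Sure! The category is billing."))
```

Rejected outputs can be retried or escalated, while `needs_review` items land in a queue for a human; neither reaches the customer unchecked.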

Data privacy is the barrier that comes up most consistently.

53% of organisations in a 2025 Cloudera survey cited it as their primary concern. And it's not theoretical: 40% of organisations have already experienced an AI-related data breach.

The moment employees start pasting customer data, financial records, or proprietary code into a public model, you have a compliance problem.

The fix is an architecture built on private deployments, on-premises inference, or enterprise-tier API contracts with clear data agreements.

Legacy system compatibility is where timelines get blown. 75% of legacy systems simply cannot integrate cleanly with modern AI tooling. Most enterprises are running software that's 10–20 years old, and it was built for a pre-API world. Bridging that gap takes serious data engineering work that many teams don't budget for.

Compliance is a moving target, and it's getting stricter every day.

The EU AI Act's obligations for general-purpose AI providers became enforceable in August 2025. The Act provides for penalties of up to €35 million or 7% of global annual turnover. California's AB 2013 added its own disclosure requirements, effective January 2026.

So, if you're building on LLMs for a regulated industry, this has to be part of the design and not a retrofit.

Failure to scale is the most overlooked challenge. Klarna made headlines for deploying an AI system that handled the work of 853 customer service agents. Less reported: by 2025, they were bringing human agents back.

The CEO acknowledged that cost had been too dominant a factor in the initial evaluation and that quality and complexity handling hadn't been properly accounted for.

I don’t call it a failure story. That's what building at the frontier actually looks like. You deploy, you learn, you adjust. The teams that treat their first production deployment as a finished product are the ones that end up in the 95%.

Understanding why projects fail is half the work. The other half is knowing what the ones that succeed actually do differently.

The same MIT NANDA research I mentioned earlier also found that vendor-led, domain-specific projects succeed at roughly twice the rate of broad internal builds.

That tells you something important about where to start.

Pick one high-volume, language-heavy workflow. Get it fully into production with measurable outcomes. Teams that try to do everything at once end up with nothing in production.

85% of AI project failures trace to data quality. Clean, well-governed enterprise data is the scarcest resource. Fix it before selecting a model.

Only 28% of organisations have board-level AI oversight. Establish a RACI across security, legal, and engineering before deployment. Enterprises using centralised AI Gateways report 40–60% cost reductions from intelligent routing and caching alone.
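To show what routing and caching mean in a gateway, here is a minimal sketch. It assumes two hypothetical backends (`small_model` and `large_model`, stubbed so the example runs offline) and uses prompt length as a crude routing signal. Real gateways typically use richer signals and add authentication, logging, and rate limiting.

```python
import hashlib
from typing import Callable


# Hypothetical backends; real ones would call hosted or private models.
def small_model(prompt: str) -> str:
    return f"small:{len(prompt)}"


def large_model(prompt: str) -> str:
    return f"large:{len(prompt)}"


class AIGateway:
    """Single entry point that caches answers and routes by prompt size."""

    def __init__(
        self,
        cheap: Callable[[str], str],
        strong: Callable[[str], str],
        long_prompt_chars: int = 200,  # assumed routing threshold
    ) -> None:
        self.cheap = cheap
        self.strong = strong
        self.long_prompt_chars = long_prompt_chars
        self.cache: dict[str, str] = {}
        self.backend_calls = 0

    def _key(self, prompt: str) -> str:
        # Hash the whitespace-trimmed prompt so trivially different
        # copies of the same request share one cache entry.
        return hashlib.sha256(prompt.strip().encode("utf-8")).hexdigest()

    def ask(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self.cache:  # cache hit: no model call, no cost
            return self.cache[key]
        long_prompt = len(prompt) > self.long_prompt_chars
        backend = self.strong if long_prompt else self.cheap
        self.backend_calls += 1
        answer = backend(prompt)
        self.cache[key] = answer
        return answer


if __name__ == "__main__":
    gw = AIGateway(small_model, large_model)
    gw.ask("What is our refund policy?")
    gw.ask("What is our refund policy?")  # served from cache
    print("backend calls:", gw.backend_calls)
```

Savings in a setup like this come from two places: repeated questions never reach a model, and short, simple requests go to the cheaper backend.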

76% of mature AI deployments include human review at some stage. Know exactly which decisions the model makes autonomously, which it flags, and which it never touches.

Only 20% of companies currently redesign their workflows before selecting an AI stack. McKinsey found that organisations which did so were twice as likely to hit their revenue goals.

The architecture decisions you make in 2026 will determine how much catch-up you're doing in 2028.

At Neuronimbus, LLM integration is not a service line we stood up in response to the hype. It's a natural extension of 20+ years and 4,200+ enterprise solutions built across healthcare, financial services, retail, and media.

We start with discovery. That means going deep on your data, your workflows, and the actual problem before recommending any model or architecture. We've seen too many projects fail because the stack was chosen before the problem was understood.

Our team spans AI engineers, data scientists, and domain specialists working with the full range of deployment models: API-based integration, RAG pipelines, fine-tuned models, and agentic systems. Compliance (HIPAA, GDPR, EU AI Act) is built in from the start, not retrofitted at the end.

We measure success the way you should: in business outcomes, not benchmarks.

If you're evaluating LLM integration for your digital products and want to move from pilot to production without burning 18 months finding out what doesn't work, that's exactly what we do.

Our goal is not just to deploy AI models.

It is to build AI-enabled digital products that operate reliably within real business environments.

That means designing solutions that are:

When AI is implemented in this way, it stops being a technology experiment.

It becomes a practical tool that improves how organizations work every day.

LLM integration involves embedding large language models into digital products to automate tasks like customer support, content generation, analytics, and workflow automation.

Enterprises are adopting LLMs due to high ROI, improved productivity, and real-world impact, with many companies seeing faster operations and significant cost savings.

The four key approaches are API-based integration, Retrieval-Augmented Generation (RAG), fine-tuning, and agentic or multimodal systems, each suited to different use cases.

Major challenges include hallucinations, data privacy risks, legacy system compatibility, compliance issues, and difficulties in scaling AI solutions effectively.

Start with a focused use case, invest heavily in data quality, build governance early, include human oversight, and measure success based on business outcomes.
